Imagine your favorite musicians from throughout all of human history (paging Kurt Cobain and Layne Staley!) getting together in the same room. Together, they are free-flowing and improvising melodies on top of chord progressions with beautiful and surprising results. The tune is familiar enough to tap your foot along to but multilayered enough to be different and completely thrilling.

Being a part of the Project Passage experience was exactly this. Project Passage was an “OCLC and Friends” metadata jam session. (“OCLC and Friends” would make a great band name, am I right?) At the conclusion of the Project Passage experience, I was convinced that linked data and libraries have a bright future together.

From the Trough of Disillusionment to the Slopes of Enlightenment

When talking about emerging trends, the technology industry uses the Gartner Hype Cycle as a yearly roadmap to navigate through the rosy-hued Peaks of Inflated Expectation to the aspirational Slopes of Enlightenment. Libraries and linked data are entwined together trekking relentlessly through disillusionment towards productivity.

Librarians are not strangers to this cycle. Together, we’ve broken through change barriers and slogged through bouts of professional humdrum plenty of times before. However, the emotions surrounding libraries and linked data take on an extra special fever-pitch because we are discussing an international shared mission, evaluating a new library service, and re-envisioning the very fabric of the Bibliographic Universe. Linked data, as a monumental development with implications for the future of library resource discoverability, has a lot riding on it. We know it. OCLC knows it. Your library vendors know it. Your catalogers and metadata wranglers know it.

Seven lessons, six constants, five changes, and four webcomics

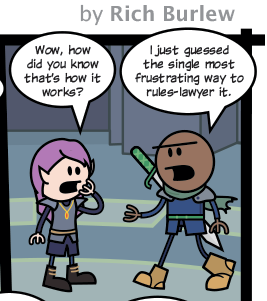

Project Passage’s seven lessons (see page 59 of the OCLC Research report) pointed out how much our current cataloging and metadata practices will have to change within a Wikimedia-like object/relationship ecosystem. But … many foundational principles remain the same. Let’s walk through all that at a very high level, with some help from our favorite webcomic artists, Randall Munroe, Mya Lixian Gosling, and Rich Burlew, to illustrate things for us.

Lesson one. The building blocks of a Wikibase item entity can be translated into a straightforward procedure for creating structured data with a precision that exceeds the expressive power of current library standards. But can this data be used to build the context required to discover and interpret a resource?

What changes? Project Passage pointed out that we need enhancements to current library ontologies to address gaps in the models and that our best practices on description enable the identification of unique resources.

What’s constant? Some stuff is harder to catalog than other stuff and so it is with Wikimedia’s fingerprint-y minimal resource description “core-ish-ness.”
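For readers who think better in data structures, here is a minimal sketch of the "building blocks" that lesson one refers to: an item entity with a fingerprint (label, description, aliases) plus a list of statements. It is a simplified illustration, not the Wikibase data model in full (qualifiers, references, and ranks are left out), and every identifier and value in it (`Q1`, `Q2`, `P1`, `P2`, the names) is made up for the example.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Statement:
    """One factual claim: a property whose value is a literal or another entity's ID."""
    property_id: str
    value: str


@dataclass
class Item:
    """A simplified item entity: a fingerprint plus a list of statements."""
    item_id: str
    label: str
    description: str
    aliases: list[str] = field(default_factory=list)
    statements: list[Statement] = field(default_factory=list)

    def fingerprint(self) -> dict:
        """Return the minimal human-readable identification of the item."""
        return {
            "label": self.label,
            "description": self.description,
            "aliases": list(self.aliases),
        }

    def values_of(self, property_id: str) -> list[str]:
        """Return every value asserted for the given property."""
        return [s.value for s in self.statements if s.property_id == property_id]


# Hypothetical entities; the IDs and property meanings are assumptions.
author = Item("Q1", "Example Author", "hypothetical novelist")
work = Item("Q2", "Example Novel", "hypothetical novel", aliases=["The Example"])

# P1 stands in for an "author" property, P2 for a "publication year" property.
work.statements.append(Statement("P1", author.item_id))
work.statements.append(Statement("P2", "1999"))
```

Calling `work.values_of("P1")` returns the author's entity ID rather than a name string, which is the point: the description links to another entity instead of repeating its text.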

Lesson two. User-driven ontology design enabled by the Wikibase platform is a good thing. But how should it be managed for long-term sustainability?

What changes? Project Passage showed us just how much can be gained by incorporating entities from outside the library domain. What remains to be seen is how a more democratically governed vocabulary will affect ontology management.

What’s constant? We will still need ontology governance and rules on how to catalog. Potentially even more rules and standards than we have now.

Lesson three. The Wikibase platform, supplemented with OCLC’s enhancements and stand-alone utilities, represents a step forward in enabling librarians to obtain feedback as they work.

What changes? Nothing. Description boils down to discovery, discovery, discovery. So how do we define “good enough” data?

What’s constant? If they can’t find it, it ain’t good enough. We know that resource description is about the user experience. At the end of the day, our tasks boil down to discoverability.

Lesson four. Robust tools are required for local data management.

What changes? We are going to save time and effort by not having to create comprehensive descriptions since factual statements are added to a prepopulated graph. So, trust me, for a while this is going to feel really weird.

What’s constant? Querying existing bibliographic and authority data has always helped catalogers understand “what is already out there” and, subsequently, add to it. We will just be doing this task more efficiently, by making a single factual statement about an entity or relationship rather than by populating the same strings over and over on a record-by-record basis.
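To make that contrast concrete, here is a small sketch comparing the two approaches. It is a toy model under stated assumptions, not how any particular cataloging system or Wikibase stores data: the records, the entity ID `Q1`, and the helper functions are all hypothetical.

```python
# Record-by-record model: the author's name is a string copied into every record.
records = [
    {"title": "Example Novel", "author": "Author, Example"},
    {"title": "Example Sequel", "author": "Author, Example"},
]

# Entity model: the name is stated once, and each work points at the entity ID.
entities = {"Q1": {"label": "Author, Example"}}
works = [
    {"title": "Example Novel", "author": "Q1"},
    {"title": "Example Sequel", "author": "Q1"},
]


def rename_in_records(records, old, new):
    """Update the name string in every matching record; return how many were touched."""
    touched = 0
    for record in records:
        if record["author"] == old:
            record["author"] = new
            touched += 1
    return touched


def rename_entity(entities, entity_id, new):
    """Update the single label on the entity; return how many statements were touched."""
    entities[entity_id]["label"] = new
    return 1


def display_author(work, entities):
    """Resolve a work's author ID to the entity's current label."""
    return entities[work["author"]]["label"]
```

In the record model, a name change means one edit per record that carries the string. In the entity model, it is one edit, and `display_author` picks up the new label for every work that links to `Q1`.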

Lesson five. To populate knowledge graphs with library metadata, tools that facilitate the reuse and enhancement of data created elsewhere are recommended.

What changes? The data may come from a place other than the “typical” library sources. Differentiating between authors, corporate bodies, and topics is about to become a whole lot more important.

What’s constant? The reuse and enhancement of data, concepts of authoritativeness and trustworthiness, and the reduction of duplicates are enabled by the crowdsourcing of library work and reliant on functionality within particular systems. Best yet, this ecosystem will actually be able to use the incredible, robust details we already collect on entities. Catalogers need to reconcile search results to identify items in hand with existing descriptions.

Lesson six. The pilot underscored the need for interoperability between resources, both for ingest and export.

What changes? Nothing. Libraries have always understood the need to work together, create innovative low-cost sustainable solutions, and create interoperability. Our current library copy cataloging and sharing model will need to continue to evolve and create new paths for library cost savings, just as it has done over the past 50 years.

What’s constant? Not a lot. Interoperability in data, interoperability in systems, and synchronization of data stores will change. The targets for ingest and export of that data will remain the same. But the vocabularies will need updating as technologies change and to reflect new social conventions.

Lesson seven. The descriptions created in the Wikibase editing interface make the traditional distinction between authority and bibliographic records disappear.

What changes? Everything. The traditional walls between authority work and bibliographic description are coming down, folks.

What’s constant? Catalogers will have a major role in reconciling the Bibliographic Universe with all the other ones, including the “real” one that you and I work, breathe, and sleep in. Catalogers will continue to act as a conduit for data interpretation and build important context around the factual statements surrounding entities.

You should really read the whole report

The lessons from above are taken from “Creating Library Linked Data with Wikibase: Lessons Learned from Project Passage.” It’s 89 pages. I know, because I helped write it.

89 pages. That’s not long. C’mon. We’re library workers. Anything under 300 pages is a lark, right? Plus, very little of that is big chunks of text. Like linked data itself, we designed it to be consumable in chunks. You can read the text without the footnotes. Or the footnotes without the text. You can skip around or just look at the pictures and still learn something.

You should read it because it highlights some very exciting stuff: real-life use cases for library resources created by real-life catalogers and library metadata wranglers using Wikibase. We got our hands dirty in data by creating and maintaining bibliographic data in an entity-relationship ecosystem.

We, catalogers and metadata wranglers, are writing the next chapter on library resource discoverability. Identifying “the entities that matter” will drive the construction of the future Bibliographic Universe. Building that house, together, gives us a deep sense of stewardship over the next generation’s worldwide union catalog of entities and relationships.

All webcomics used with direct permission or, in the case of xkcd, appropriate attribution.

Note from OCLC: The work done by kalan and the other Project Passage partners has been absolutely instrumental in helping OCLC move our linked data strategy forward. As a result of that project and other work done by OCLC staff, members and partners throughout the profession, the Andrew W. Mellon Foundation has recently awarded OCLC a $2.436 million grant to develop a shared “Entity Management Infrastructure” that will support linked data management initiatives underway in the library and scholarly communications community. The Mellon grant funding represents approximately half of the total cost of the Entity Management Infrastructure project. OCLC is contributing the remaining half of the required investment. You can read more about the grant award here. Our thanks to kalan and all our other partners in the linked data community!

Share your comments and questions on Twitter with hashtag #OCLCnext.